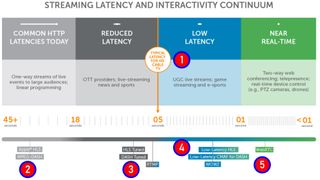

Low latency is a universal aspiration in media. When a company like Wowza produces the perfect chart to explain low-latency streaming technologies, you have to take your hat off to them and use the chart, with attribution and some minor modifications. I present said chart as Figure 1; let’s discuss it in the order designated by the highlighted numbers, which I’ve added.

1. Applications for Low Latency

Just to make sure we’re all on the same page, latency in the context of live streaming means the glass-to-glass delay. The first glass is the camera at the actual live event; the second is the screen you’re watching. Latency is the delay between when the action appears in the camera and when it shows up on your phone. Contributing to latency are factors like encoding time (at the event), transport time to the cloud, transcoding time in the cloud (to create the encoding ladder), delivery time, and critically, how many seconds your player buffers before starting to play.
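To make the components concrete, here is a minimal sketch that adds them up into a glass-to-glass figure. The class name, field names, and every number in the example are illustrative assumptions, not measurements from any real workflow.

```python
from dataclasses import dataclass


@dataclass
class LatencyBudget:
    """Glass-to-glass latency components, in seconds (hypothetical values)."""
    encode: float          # encoding time at the event
    contribution: float    # transport time to the cloud
    transcode: float       # building the encoding ladder in the cloud
    delivery: float        # delivery time to the viewer
    player_buffer: float   # seconds buffered before playback starts

    def total(self) -> float:
        """Sum of all components: the glass-to-glass delay."""
        return sum(vars(self).values())

    def largest(self) -> str:
        """Name of the component contributing the most delay."""
        parts = vars(self)
        return max(parts, key=parts.get)


# Assumed numbers, for illustration only.
budget = LatencyBudget(encode=1.0, contribution=0.5, transcode=2.0,
                       delivery=1.0, player_buffer=18.0)
print(f"glass-to-glass: {budget.total()} s, dominated by {budget.largest()}")
```

With numbers in this range, the player buffer dwarfs everything else, which is why most of the techniques discussed here focus on shrinking it.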

The top layer shows typical applications and their latency requirements. Two popular applications missing from the low-latency and near-real-time categories are gambling and auction sites.

Before diving into our technology discussion, understand that the lower the latency of your live stream, the less resilient the stream is to bandwidth interruptions. For example, using default settings, an HLS stream will play through 15+ seconds of interrupted bandwidth, and if it’s restored at that point, the viewer may never know there was a problem. In contrast, a low-latency stream will stop playing almost immediately after an interruption. So, the benefit of low-latency startup time always needs to be balanced against the negative of stoppages in playback. If you don’t absolutely need low latency it may not be worth sacrificing resiliency to get it.
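The trade-off can be sketched with a deliberately simple model: the viewer sees a stall only for the part of the outage that outlasts the media already in the buffer. The function name is hypothetical, the 15-second buffer comes from the HLS example above, and the 1-second low-latency buffer is an assumption. The model also assumes that no data arrives during the outage and ignores rebuffering time afterwards.

```python
def stall_seconds(buffered_seconds: float, outage_seconds: float) -> float:
    """Seconds of frozen playback when the network drops for
    `outage_seconds` while the player holds `buffered_seconds` of media."""
    return max(0.0, outage_seconds - buffered_seconds)


outage = 10.0  # hypothetical 10-second bandwidth interruption

hls_stall = stall_seconds(buffered_seconds=15.0, outage_seconds=outage)
low_latency_stall = stall_seconds(buffered_seconds=1.0, outage_seconds=outage)

print(f"default HLS stall: {hls_stall} s")          # viewer never notices
print(f"low-latency stall: {low_latency_stall} s")  # playback freezes
```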

That said, it’s useful to divide latency into three categories, as follows.

- Nice to have - Faster is always better, but if you’re live-streaming a Board of Education meeting or high-school football game, you may decide that stream robustness is more important than low latency, particularly if many viewers are watching on low bitrate connections.

- Competitive advantage - In some instances, low latency provides a competitive advantage, or high latency means a competitive disadvantage. You’ll note in the chart that the typical latency for cable TV is around five seconds. If your streaming service is competing against cable, you need to be in that range, with lower latency providing a modest competitive advantage.

- Real-time communications - If you’re a gambling or auction site, or your application otherwise requires real-time communications, you absolutely need to deliver low latency.

Now that we know the categories, let’s look at the most efficient way to deliver the needed level of low latency.

2/3 Nice to Have Low Latency

The number 2 shows that Apple HLS and MPEG-DASH deployed using their default settings result in about 30 seconds of latency. The numbers are simple; if you use ten-second segment sizes and require three segments to be in the player buffer before playback starts, you’re at thirty seconds. In truth, Apple changed their requirements from ten seconds to six seconds a few years ago, and most DASH producers use 4-6 second segments, so the default number is really closer to 20 seconds.

Still, number 3, HLS Tuned and DASH Tuned, shows latency of around 6-8 seconds. In essence, tuning means changing from 10-second segments to 2-second segments which, applying the same math, delivers the 6-8 seconds of latency. So, when latency is nice to have, you can cut latency dramatically with no development time or cost and without significantly increasing deliverability risk.
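The segment math for numbers 2 and 3 fits in a few lines. This is a sketch of the buffer contribution only: the function name is hypothetical, the three-segment buffer is the rule of thumb quoted above, and encoding and delivery overhead (which accounts for the gap between these results and the chart's figures) is left out.

```python
def buffer_latency(segment_seconds: float, segments_buffered: int = 3) -> float:
    """Latency contributed by the player buffer: segment length
    multiplied by the number of segments required before playback."""
    return segment_seconds * segments_buffered


scenarios = {
    "original default (10 s segments)": 10,
    "current HLS default (6 s segments)": 6,
    "tuned (2 s segments)": 2,
}

for label, segment_seconds in scenarios.items():
    print(f"{label}: {buffer_latency(segment_seconds)} s of buffer latency")
```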

4. Competitive Advantage - Low-Latency HTTP Technologies

When low latency is needed as a competitive advantage, just cutting segment sizes won’t do it; you’ll have to implement a true low-latency technology. Here, there are two classes: HTTP technologies like Low-Latency HLS and Low-Latency CMAF for DASH, and solutions based upon other technologies, like WebSockets and WebRTC.

For most producers with applications in this class, low-latency HTTP technologies offer the best mix of affordability, backwards compatibility, flexibility, and feature-set. So, I’ll cover Low Latency HLS and Low-Latency CMAF for DASH in this section, and other technologies in the next.

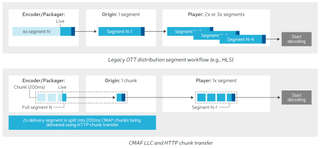

All HLS/DASH/CMAF-based low-latency systems work the same basic way, as shown in Figure 2. That is, rather than waiting until a complete segment is encoded, which typically takes between 6 and 10 seconds (top of Figure 2), the encoder creates much shorter chunks that are transferred to the CDN as soon as they are complete (bottom of Figure 2).

As an example, if your encoder was producing six-second segments, you’d have at least six seconds of latency; triple that if the normal three segments were required to be received by the viewer before playback begins. If your encoder pushed out chunks every 200 milliseconds, however, and the player was configured to start playback immediately, latency should be much, much less. One key benefit of this schema is backwards compatibility; since segments are still being created, players not compatible with the low-latency schema should still be able to play the segments, albeit with longer latency. These segments are also instantly available for VOD presentations from the live stream.
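A rough sketch of that comparison follows, using the six-second segment and 200-millisecond chunk from the example. The function names are hypothetical, and the model only captures how long media waits at the encoder before it can be sent; network and player delays are ignored.

```python
from typing import Optional


def chunks_per_segment(segment_ms: int, chunk_ms: int) -> int:
    """Number of chunks that make up one full segment."""
    if segment_ms % chunk_ms != 0:
        raise ValueError("segment length must be a multiple of chunk length")
    return segment_ms // chunk_ms


def first_media_available_ms(segment_ms: int, chunk_ms: Optional[int] = None) -> int:
    """Milliseconds after capture before the encoder can hand media to the CDN.

    Without chunking, the whole segment must finish first; with chunking,
    only the first chunk must.
    """
    return segment_ms if chunk_ms is None else chunk_ms


segment_ms, chunk_ms = 6000, 200

print(chunks_per_segment(segment_ms, chunk_ms), "chunks per segment")
print(first_media_available_ms(segment_ms), "ms wait with whole segments")
print(first_media_available_ms(segment_ms, chunk_ms), "ms wait with chunks")
```

Because the chunks still add up to complete segments, a player that ignores chunking can fetch the finished six-second segment as usual, which is the backwards compatibility described above.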

Beyond these advantages, low-latency HTTP technologies support most of the features of their normal-latency siblings, including encryption, advertising insertion, and subtitling, which aren’t universally supported in WebRTC and WebSockets-based technologies. You can likely implement your selected low-latency technology using your existing player and delivery infrastructure, minimizing development and other technology costs.

Choosing an HTTP Low Latency Technology

There are two major standards for HTTP Adaptive Streaming, HLS and DASH, plus a unifying container format, CMAF. Once Apple announced its Low Latency HLS solution, it instantly displaced several grassroots efforts and became the technology of choice for delivering low latency to HLS. Though the spec is still relatively new, it’s already supported by technology providers like Wowza and WMSPanel with their Nimble Streamer product.

There is a DVB standard for low-latency DASH and the DASH Industry Forum has approved several Low-Latency Modes for DASH to be included in their next Interoperability guidelines. Pursuant to all these specifications, encoder and player developers and content delivery networks have been working for several years to ensure interoperability so that all DASH/CMAF low-latency applications should hit the ground running.

As an example, Harmonic and Akamai together demonstrated low latency CMAF as far back as NAB and IBC 2017, showing live OTT delivery with a latency under five seconds. Since then, Harmonic has integrated low latency DASH functionality into their appliance-based products (Packager XOS and Electra XOS) and SaaS solutions (VOS Cluster and VOS260). Many other encoder and player vendors have done the same.

Whether you choose to implement Low Latency HLS or Low Latency for DASH, or both, the transition from your existing HLS and/or DASH delivery architecture should be relatively seamless and inexpensive.

5. Real-Time Communications

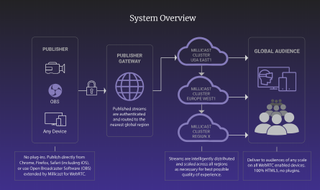

WebRTC is typically the engine for an integrated package that includes the encoder, player, and delivery infrastructure. Examples of WebRTC-based large-scale streaming solutions include Real Time from Phenix, Limelight Realtime Streaming, and Millicast from CoSMo Software and Influxis (Figure 3). You can also access WebRTC technology in tools like the Wowza Streaming Engine, CoSMo Software, and Red 5 Pro Server. Latency times for technologies in this class range from 0.5 seconds for 71% of the streams (Phenix), to under 500 milliseconds (Red5 Pro), to under one second (Limelight). If you need sub-two-second latency, WebRTC is an option you need to consider.

If you need real-time communications, you’ll likely need to implement either a WebRTC or WebSockets-based solution. WebRTC was formulated for browser-to-browser communications and is supported by all major desktop browsers, on Android, iOS, Chrome OS, Firefox OS, Tizen 3.0, and BlackBerry 10, so it should run without downloads on any of these platforms. As the name suggests, WebRTC is a protocol for delivering live streams to each viewer, either peer-to-peer or server-to-peer.

WebSockets is a real-time protocol that creates and maintains a persistent connection between a server and client that either party can use to transmit data. This connection can be used to support both video delivery and other communications, so it is convenient if your application needs interactivity. Like WebRTC implementations, WebSockets-based solutions are typically offered as a service that includes player and CDN, and you can use any encoder that can transport streams to the server via RTMP or WebRTC. Examples include Nanocosmos’ nanoStream Cloud and Wowza’s Streaming Cloud With Ultra Low Latency. Wowza claims sub-3 second latency for their solution while Nanocosmos claims around one second, glass to glass.

Other Low Latency Technologies

There is a fourth category of products best called “other” that use different technologies to provide low latency. This category includes THEO Technologies’ High Efficiency Streaming Protocol (HESP), a proprietary HTTP adaptive streaming protocol that, according to THEO, delivers sub-100-millisecond latency while reducing bandwidth by about 10% as compared to other low-latency technologies. HESP uses standard encoders and CDNs but requires custom support in the packager and player (Figure 4). You can read more about HESP in a white paper available for download.

Here is a list of questions you should consider when deciding which low-latency technology to implement and how to do so.

Build or Buy?

If you implement the technology yourself, be sure to answer all the questions listed below before choosing a technology. If you’re choosing a service provider, use the same questions to compare the different providers.

Are you choosing a standard or a partner?

We’ve outlined the different technologies for achieving low latency above, but implementations vary from service provider to service provider. For example, not all LL HLS implementations will incorporate ABR delivery, at least not at first. Most traditional broadcast-like applications will likely migrate towards HTTP-based standards like low-latency HLS/DASH/CMAF, while applications requiring ultra-low latency, like betting and auctions, will gravitate towards the other technologies. In either case, when choosing a service provider, it’s more important to determine if they can meet your technological and business goals than which technology they actually implement.

Can it scale and at what cost?

One of the advantages of HTTP-based technologies is that they scale at standard pricing using most CDNs. In contrast, most WebRTC and WebSocket-based technologies require a dedicated delivery infrastructure implemented by the service provider. For this reason, non-HTTP-based services can be more expensive to deliver than HLS/DASH/CMAF. When comparing service providers, ascertain the soup-to-nuts cost of the event, including ingress, transcoding, bandwidth, VOD file creation, one-time or per-event configurations, and the like.

Implementation-related issues?

Ask the following questions related to implementing and using the technology.

- What’s the latency achievable at a scale relevant to your audience size and geographic distribution?

- Are there any quality limitations? Some technologies may be limited to certain maximum resolutions or H.264 profiles.

- Will the packets pass through a firewall? HTTP-based systems use HTTP protocols, which are firewall-friendly. Others use UDP, which may not pass through firewalls automatically. If UDP, are there any backchannels to deliver to blocked viewers?

- What platforms are supported? Presumably computers and mobile devices, but what about STBs, dongles, OTT devices, and smart TVs?

- Can the system scale to meet your target viewer numbers? Is the CDN infrastructure private, and if so, can it deliver to all relevant viewers in all relevant markets? What are the anticipated costs of scaling?

- Can you use your own player or do you have to use the system’s player? If your own, what changes are required and how much will that cost?

- What’s needed for mobile playback? Will the streams play in a browser or is an app required? If there’s an app required (or desirable) are SDKs available?

- Which encoders can the system use? Most should use any encoder that can support RTMP connections into the cloud transcoder, but check if other protocols are needed.

- Can the content be reused for VOD or will re-encoding be required? Not a huge deal since it costs about $20/hour of video to transcode to a reasonable encoding ladder but expensive for frequent broadcasts.

- What are the redundancy options and what are the costs? For mission-critical broadcasts, you’ll want to know how to duplicate the encoding/broadcast workflow should any system component go down during the event. Is this redundancy supported and what is the cost?
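To see why VOD re-encoding is a minor cost for a one-off event but adds up for frequent broadcasts, here is a small sketch using the roughly $20 per hour figure from the list above. The function name and the broadcast schedules are hypothetical.

```python
TRANSCODE_COST_PER_HOUR = 20.0  # USD per hour of video, the estimate quoted above


def annual_reencode_cost(events_per_year: int, hours_per_event: float,
                         cost_per_hour: float = TRANSCODE_COST_PER_HOUR) -> float:
    """Yearly cost of re-encoding live events for VOD."""
    return events_per_year * hours_per_event * cost_per_hour


# Hypothetical schedules: a single two-hour event vs. a weekly two-hour show.
one_off = annual_reencode_cost(events_per_year=1, hours_per_event=2.0)
weekly = annual_reencode_cost(events_per_year=52, hours_per_event=2.0)

print(f"one-off event: ${one_off:,.2f}")
print(f"weekly show:   ${weekly:,.2f}")
```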

What features are available and at what scale?

There will be a wide variety of feature offerings from the different vendors, which may or may not include:

- ABR streaming - how many streams and are there any relevant bitrate or resolution limitations?

- What about DVR features? Can the viewers stop and restart playback without losing any content?

- Video synchronization - Can the system synchronize all viewers to the same point in the stream? Streams can drift over locations and devices, and without this capability, users on some connections may have an advantage for auctions or gambling.

- What content protection is available? If you’re a premium content producer you may need true DRM. Others can get by with authentication or other similar techniques.

- Are captions available? Captions are legally required for some broadcasts but generally beneficial for all.

- What about advertising insertion or other monetization schema? Does the technology/service provider support this?

In general, if you’re a streaming shop seeking to meet or beat broadcast times in the 5 to 6 second range, a standards-based HTTP technology is probably your best bet, since it will come closest to supporting the same feature set you’re currently using, like content protection, captions, and monetization. If you have an application that requires much lower latency, you’ll probably need a WebRTC or WebSockets-based solution, or a proprietary HTTP technology. In either case, asking the questions listed above should help you identify the technology and/or service provider that best meets your needs.
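As a rough first pass, the guidance in this article can be condensed into a simple lookup. The function name is hypothetical and the cut-off values are one reading of the latency ranges discussed above, not figures from any specification; treat the result as a starting point for the questions listed earlier, not a final answer.

```python
def suggest_technology(target_latency_seconds: float) -> str:
    """Map a target glass-to-glass latency to a technology class.

    Thresholds are assumptions drawn from the ranges in this article.
    """
    if target_latency_seconds >= 20:
        return "HLS/DASH with default settings"
    if target_latency_seconds >= 6:
        return "HLS/DASH tuned with 2-second segments"
    if target_latency_seconds >= 2:
        return "Low-Latency HLS or Low-Latency CMAF for DASH"
    return "WebRTC, WebSockets, or a proprietary HTTP technology"


for target in (30, 8, 5, 0.5):
    print(f"{target} s target -> {suggest_technology(target)}")
```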