Blue Note Entertainment Group may be known for its iconic, often vintage jazz clubs, but for more than a year, it has been looking to the future, collaborating with Audinate to explore potential uses for the latter’s Dante AV networking technology. The mission is a familiar one: to help musicians in different locations play together in real time via the Internet.

There are already a handful of solutions available for that task, but the Blue Note/Audinate collaboration takes things up a notch by including video, too. A musician’s subtle head nod that says “take another 12 bars” or an encouraging grin caused by the resulting solo are visual cues that can make or break a live performance, so bringing video to the table is a considerable challenge, but one worth taking on.

In late June, the collaborators held closed-door performances at Sony Hall in New York City. With vocals and guitars in New York, electric bass at Washington, D.C.’s Howard Theater, and drums and piano at S.I.R. Production Studios in Nashville, the performances were proofs of concept that worked not only technically but artistically as well.

The performances, and the collaboration itself, were spearheaded by three project leaders: Amit Peleg of integration firm Peltrix, working as director and technical manager; Glenn Dickins of Audinate R&D, overseeing the necessary Dante networking; and Patrick Killianey, senior technical training manager at Audinate, acting as coordinator and advisor. The project first began to take shape in May 2020, when Peleg, working with Blue Note, and Killianey began discussing what might be possible for remote performers.

The opportunity emerged in September 2020, when Blue Note, which owns Sony Hall, let the project turn the venue, shuttered for the pandemic, into a lab. Soon, real-time audio performances by musicians in isolated rooms around the venue were being recorded, using artificial delays to simulate long distances between them. Video wasn’t part of the picture, as Dante AV was still under development at the time, but Killianey recalls, “When I started testing the latency that Dante AV provided, I asked Amit, ‘Do you want to try to do long-distance video in real-time?’” Soon video became a requirement, paving the way for a return to Sony Hall in June 2021.
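The idea of using an artificial delay to stand in for distance can be sketched in a few lines of Python. This is only an illustration, not the tooling the project used, which the article does not describe: the fiber velocity factor, the 48 kHz sample rate, the 1,000 km distance and all function names are assumptions chosen for the example, and real Internet paths add routing and buffering delay on top of pure propagation time.

```python
# Illustrative sketch only: estimates one-way propagation delay for a given
# distance and applies it to a block of audio samples as leading silence.
# All constants below are assumptions, not figures from the project.

SPEED_OF_LIGHT_KM_S = 299_792.458   # speed of light in a vacuum
FIBER_VELOCITY_FACTOR = 0.67        # assumed: light in fiber travels at ~2/3 c


def one_way_delay_ms(distance_km, velocity_factor=FIBER_VELOCITY_FACTOR):
    """Return the ideal one-way propagation delay in milliseconds."""
    return distance_km / (SPEED_OF_LIGHT_KM_S * velocity_factor) * 1000.0


def delay_in_samples(delay_ms, sample_rate_hz=48_000):
    """Convert a delay in milliseconds to a whole number of samples."""
    return round(delay_ms * sample_rate_hz / 1000.0)


def apply_delay(samples, delay_samples):
    """Shift audio later by prepending silence, keeping the block length."""
    if delay_samples <= 0:
        return list(samples)
    padded = [0.0] * delay_samples + list(samples)
    return padded[:len(samples)]


if __name__ == "__main__":
    distance_km = 1_000  # hypothetical distance between two venues
    delay_ms = one_way_delay_ms(distance_km)
    print(f"{distance_km} km is roughly {delay_ms:.2f} ms one way")
    print(f"which is {delay_in_samples(delay_ms)} samples at 48 kHz")
```

Even under these idealized assumptions, a link of this length costs several milliseconds each way before any equipment latency is counted, which is why testing with simulated delays in a single building is a reasonable first step.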

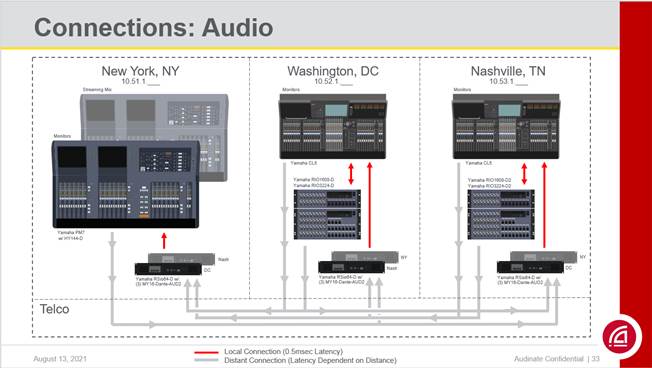

For the June performances, each location had its own monitor engineer mixing on a Dante-enabled Yamaha console for the musicians (two PM7s in NYC, one for monitors and the other for the streaming mix, and a single CL5 at each of the other sites). Each console was tied locally into a pair of RSio64-D interfaces, each with three MY16-Dante-AUD2 cards.

“When we made a distant connection, we could transmit directly from our Dante devices,” said Killianey. “However, because we needed custom latency settings from each destination, we established Yamaha RSio64s and loaded them up with Dante cards. One Dante port managed the distance latency, whatever that needed to be, then the other Dante port repeated the signal to the local network at 0.5ms for consoles, recording, intercoms and so on, allowing us to split the signal easily. Each location had two RSio64Ds so we could maintain different latencies for each destination.”

Video, handled with Bolin D220 Dante AV cameras and Patton FPX6000 Dante AV encoders and decoders, ran at 1080p/30 fps, with some additional onstage side video screens for performers running at 720p/60 fps.

Ultimately, everything was able to pass easily down a gigabit connection. The most important lessons learned from the long-distance performances involved finding latencies that worked equally well for systems and musicians. “Dante audio links like this could be made by anyone, today, but video required support from R&D,” said Killianey. “Our goal now is to pack our video firmware with the new features we created so techs in the field can do it all.”