In May 2001, a construction crew demolished the old Gates Planetarium dome at the Denver Museum of Nature and Science, leaving only a structural framework of columns. The crew tore out the theater-in-the-round seating, removed the 70-odd projectors from the planetarium’s perimeter, and dismantled the Minolta star projector.

For anyone who has ever been to a planetarium as a child, the star projector was as much part of the experience as the stars that bloomed out of the night sky overhead. Now that fascinating, improbable machine in the middle of the dome’s floor has been made obsolete by digital technology and the space theater experience.

There are more than 1,000 planetariums in the United States, but the Gates Planetarium is one of a handful — the most prominent of which is the Hayden Planetarium at Rose Center for Earth and Space in New York City’s American Museum of Natural History — whose presentations are based around high-resolution, real-time graphics. (For more about the Hayden Planetarium, see the May 2002 issue.) A comparison between the supercomputer-driven presentations at the Gates and the old planetarium projectors makes the point. Mechanical planetariums can display 12,000 stars, and the Gates system can generate as many as 5 billion stars of statistically correct appearance for each presentation.

“In the old planetarium, we adapted the script according to the technology available,” says Daniel Neafus, former planetarium producer and now digital media coordinator. “In the current planetarium, there are no mechanical or technical boundaries.”

Projection, seating orientation, and sound: all contribute to a virtual reality, immersive space theater experience that takes viewers out of their seats and into the cosmos.

THE VIRTUAL EXPERIENCE

Over the nearly four years of the project — which encompassed contributions from dozens of consultants and museum committees as well as the constant advances in video and A/V-control technology — the central vision for the planetarium remained intact. “We wanted this to be an Omnimax, film-style environment,” says Neafus. “What was decidedly different from the film-style environment and the repeatability of that kind of experience were the flexibility and sustainability that we needed.” This would not be a system where a film would be swapped out a couple of times a year. Early on the Gates design team decided on a virtual-reality type of system, one that would allow (if needed) teaching interactivity between a presenter and the audience and that could be custom programmed on-site.

“Keeping the programming scientifically accurate and fresh was the whole underpinning to the project,” says Neafus.

The Gates computer-based virtual-reality model lets the system duplicate, in real time, anything that could be done in a traditional planetarium system. But it also allows almost anything that presenters can imagine. “The virtual-reality capabilities allow us to build a show in multiple passes,” says Neafus. “Each final scene is saved as a movie file. The movie files are then stored in 11 QuBit DS digital video recorders and played back over and over.”

“The commitment to being all digital means that you don’t have to upgrade the hardware constantly,” says Neafus. “The technology allows us to focus on the content.” NASA uses digital technology, so images from the Hubble space telescope can be used in the Gates Planetarium without reformatting them for a different technology, ensuring a constant stream of new images and data available for use in shows and educational presentations.

14 SHOWS A DAY

Seven days a week, 14 times a day, the 120-seat Gates theater transports viewers out into space and brings them back within a half hour. The premiere show is completely automated, and the venue uses multichannel video (QuBit digital servers, Silicon Graphics supercomputers, Barco projectors, SEOS image blending), lighting (Strand, Martin, High End Systems), and surround sound (Lake Technology processing, MediaMatrix, Meyer Sound loudspeakers). All planetarium systems are under Crestron control (see the sidebar “Controlling the Cosmos”).

The system has extensive scheduling installed so management can set up automated warm-up and cooldown periods, varied by day of the week. The system wakes up at around 9 a.m. and brings the major systems online, at which time it sits and waits for the first show schedule to begin. Everything is in standby mode. The operators have different levels of panel access controlled by passwords, and maintenance workers at night can control only the lights. Ushers and operators can start, stop, reset, restart, and control the predetermined sequence, which allows for flexibility and deals with the ebb and flow of crowd control. Technical staff has additional layers of specific controls, allowing for actual designing of sequenced presentations, lighting and sound controls, and show timer adjustment.
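The scheduling and access rules described above can be pictured with a small Python sketch. It is an illustration only, not the Crestron program running at the Gates. The role names, action labels, and cooldown times are assumptions made for the example; only the roughly 9 a.m. wake-up and the lights-only access for maintenance workers come from the description above.

```python
from datetime import datetime, time

# Hypothetical weekday schedule (Monday = 0): (warm-up, cooldown).
# Only the roughly 9 a.m. wake-up comes from the article; cooldown times are assumed.
SCHEDULE = {day: (time(9, 0), time(18, 0)) for day in range(7)}
SCHEDULE[5] = (time(9, 0), time(20, 0))  # assumed later cooldown on Saturdays

# Hypothetical role names and action labels mirroring the access levels described.
SHOW_ACTIONS = {"start", "stop", "reset", "restart"}
PERMISSIONS = {
    "maintenance": {"lights"},
    "operator": SHOW_ACTIONS,
    "technical": SHOW_ACTIONS | {"lights", "edit_sequence", "sound", "show_timer"},
}


def systems_should_be_on(now: datetime) -> bool:
    """Return True between the day's warm-up and cooldown times."""
    warm_up, cooldown = SCHEDULE[now.weekday()]
    return warm_up <= now.time() < cooldown


def is_allowed(role: str, action: str) -> bool:
    """Return True if the role may perform the action; unknown roles get nothing."""
    return action in PERMISSIONS.get(role, set())
```

Keeping the schedule and the permissions in plain lookup tables means a change to the operating hours or to a role's privileges is a data edit rather than a logic change.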

Additionally, pressing a single button — which is present on every page — brings up an emergency menu. On that menu, an operator can control emergency lights, stop the show, and send e-mails and messages to pagers, thus linking the operator in the dome with the outside world.

“It all sounds simple,” says Neafus. “But it took quite a bit of fine-tuning to get it all to work smoothly, and we’re still fine-tuning.”

As many as 20 people run the planetarium, rotating through the week — taking turns as ushers, presenters, ticket takers, and so on — in four-hour blocks. But the show itself is run by one person, who is definitely not a person with a pointer.

“I’m a big believer in a facilitated presentation, which we do for special events,” says Neafus. “But from the start, our model for the planetarium show was that it would be like a movie, one that runs every half hour. We made operations as efficient as possible so that we don’t always need to have highly trained presenters.”

DECISIONS, DECISIONS

Early on in the project, the internal project team made an important decision. Rather than place the entire project in the hands of one vendor, it decided to work with and coordinate among multiple vendors. The architect (HHPA) hired two consulting firms that would make critical, early decisions regarding electrical and infrastructure systems: Ford Audio Video and Auerbach and Associates (now Auerbach Pollock Friedlander).

Two years ago, when construction was well underway, the project team began re-evaluating some of the technology choices made earlier in light of new products that have since become available. “We had to address some conflicts between what the architects had planned and what we were moving toward,” says Neafus. “The holes where the projectors would go, for instance — we had to have those modified after the walls were already up.” With such complexities resolved, the individual system components began dropping into place so that by June 2002, the project team had a working space.

What it did not have, says Neafus, was the second half of the technology — the supercomputers, the projectors, an additional eight loudspeakers (beyond the original Ford Audio Video specification), and the computerized lighting system — elements the museum team had taken upon itself to deliver.

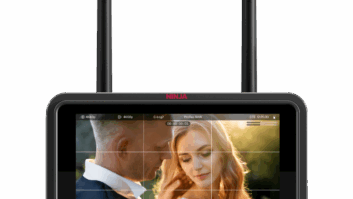

At that point, Jim Pope of Denver’s Professional Videographics was contracted to tie all of the systems and system components together under a single control system. Neafus decided to go with Crestron control at the 2002 NSCA Expo in Denver. “I was impressed that Crestron was so visibly and aggressively pursuing the new technologies, and with its 12-inch touch panel, I found what I wanted our operators to use — something that was big and sharp and could carry multimedia.”

Neafus, who’d come across Pope’s name associated with some impressive projects, saw his name on Crestron’s Web site, listed on the Crestron Authorized Independent Programmer (CAIP) page. “When we spoke, it was obvious that Jim had a clear interest in the public museum space,” Neafus says. “He was also quite honest about pushing the envelope on this project. He admitted that he’d only done about two-thirds of what we’d be asking him to do with the Crestron system previously. Consequently, he asked for some flexibility in our deadlines. That allowed us to fine-tune the system as we got each part functioning.”

Pope in turn consulted with Chris Jensen, another CAIP. “It was the old story of two heads being better than one,” says Pope. “Chris and I compete on some levels but cooperate on many others. In this case, Chris has helped me behind the scenes by being a sounding board on some of the internal module development.”

By February 2003, the majority of the systems were in place.

ALL AROUND SOUND

Audio in a domed environment has a strong vertical element, and Neafus wanted to emphasize that vertical motion in the sound system.

The original array suggested by Auerbach and Associates consisted of eight loudspeakers (as well as four subs): six around, one high, and one center-front for narration. (Auerbach also consulted with the Rose Center on lighting, sound, staging, and technical system design for the Hayden Planetarium.)

“As our thinking moved further into audio virtual reality,” says Neafus, “Auerbach expressed some concern about what playback system we’d be using and how we would go about mixing if we added many more speakers to the system.”

At SIGGRAPH 2001, Neafus was particularly encouraged by what he saw from Lake Technology, which was set up in the Silicon Graphics booth. “Here was a multichannel, controllable system that could be tied into the real-time computer. Anything was possible now: speaker placement, motion of the sound, and tracking it in the real-time environment. At this point, we completely reevaluated the sound system that was planned.”

Further research on sound in the virtual-reality environment revealed some strikingly simple recommendations, says Neafus: that the speakers themselves be of the highest quality possible and that they be symmetrically spaced, whatever their number. With that in mind, the project team went on to complete the original contract for the 8.4 speaker array and then augment it with eight more Meyer Sound loudspeakers.

The current loudspeaker array in the 56-foot-diameter hemisphere consists of three Meyer Sound CQ1s (L/C/R), five UPA1s (surround and narrator), eight UPA1s (surround), four Bag End S18E1 subwoofers with two stereo ELF1 processors, and Crown K2 amplifiers.

Audio signal is sourced from the Onyx supercomputer and fed to the Huron20 (HUR20VR) 16-channel 2-by-8 ADAT optical surround-sound processing system from Lake Technology that syncs with the SGI imaging in real time via serial/IP connection. The Huron20 feeds signal to the MediaMatrix MM208NTCN mainframe (with three BOBs), which distributes it to the subs and the Meyer Sound loudspeakers.

…AND THEN THERE WAS LIGHT

The SGI ONYX 3000 creates 11 simultaneous video signals. This 11-pipe, 1,024-by-1,280 signal can be recorded by the QuBit DDR array or pass directly through to the Barco projectors. The Crestron system turns the Barco projectors on and off; it talks via RS-232 to the QuBit DDR and SEOS Mercator/Odyssey box. The SEOS Mercator is a PC-based distortion correction component. Sitting between the image generator and the display device, the Mercator digitizes the video stream at full 24-bit color depth, warps the image into a seamless all-dome image, and delivers it to the display. The independence of the image source and the display device provides maximum system flexibility.

The signal is passed from the Mercator on to the Barcos through DVI. The Crestron system initiates either the SGI (for special demonstrations) or the time-code-based DDR QuBit (for daily show playback). At the end of each program, the Crestron resets the system and stands by to repeat the performance.

Basically, the 11-pipe ONYX and the Barco projectors combine to create the world’s largest (120 seats) public, high-resolution, virtual-reality theater.
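To give a sense of scale, the video figures quoted above can be multiplied out. The short Python sketch below uses only the 11 pipes, the 1,024-by-1,280 signal, and the 24-bit color depth mentioned in the text. The total is an upper bound on raw pixels, because edge blending overlaps neighboring images and the amount of overlap is not stated. The frame rate is not stated either, so `fps` is left as a caller-supplied assumption.

```python
PIPES = 11                  # simultaneous video signals from the image generator
WIDTH, HEIGHT = 1024, 1280  # pixels per pipe, as quoted in the article
BYTES_PER_PIXEL = 3         # 24-bit color

pixels_per_pipe = WIDTH * HEIGHT              # 1,310,720
total_pixels = PIPES * pixels_per_pipe        # 14,417,920
frame_bytes = total_pixels * BYTES_PER_PIXEL  # 43,253,760 bytes, uncompressed


def raw_bytes_per_second(fps: float) -> float:
    """Uncompressed data rate across all pipes for an assumed frame rate."""
    return frame_bytes * fps
```

That works out to about 14.4 million raw pixels, or roughly 43 MB per uncompressed all-dome frame before any overlap is accounted for.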

Lighting for the planetarium is based around a system developed by the museum team: a fiber-optic cove illumination system comprising six Martin FiberSource CMY light sources and automated spotlights (two High End Systems Technobeams and two Martin MAC 2000s). “This system allows for zones of unlimited colors to wash up the dome from the perimeter and truly sets a mood and defines the space,” says Neafus. The control system includes a lighting page for easy and accurate recall of automated color presets; precise focus and intensity control are required for tight illumination of the stage while images are on the dome. Automated presets are called up with just a tap on the screen, says Neafus.

BACK TO THE FUTURE

The success of the premiere show, A Cosmic Journey, and of the real-time demonstrations has convinced Neafus that he can add more functionality to the planetarium system. Those successes include the operators’ complete ease using the system (helped in part by replacing a huge control console, common to most planetarium systems, with a single touch panel), fast problem solving via Crestron-communicated help alerts, and the overall stability of the system.

In addition, the system is expandable and upgradeable. Because the system and system control are based on industry standards, trained staff and outside A/V consultants can perform programming updates.

The most recent update, added to the facility in October 2003, was 12 QuBit/QVIS DDR players under Crestron control. Other scheduled additions include wireless control that will allow the presenter to roam the audience; a presenter touch panel onstage; presenter buttons for calling up scenes by audience request; special presentations that will allow visitors to enter destinations and fly the planetarium; and sophisticated scientific lectures using virtual-reality PowerPoint in a 3-D environment.

The Gates Planetarium, if not a work-in-progress, is certainly a work that will grow progressively more sophisticated and versatile over time. It will not be “finished” soon — and for the planetarium team, that’s just fine.

Charles Conte heads Big Media Circus, a marketing communications company. He can be reached at [email protected].

The Denver Museum of Nature and Science

Founded in 1900, the Denver Museum of Nature and Science (www.dmns.org) is the Rocky Mountain region’s leading resource for informal science education, serving a membership base of more than 42,000 households and providing science education to more than 1.6 million people. At more than 500,000 square feet, the museum houses 15 permanent exhibits, including Space Odyssey, the IMAX Theater, and the Gates Planetarium.

The new Gates Planetarium, which opened on June 13, 2003, is part of a $50 million investment in permanent exhibits and technology that includes a 13,000-square-foot Space Odyssey exhibit, a 2,000-square-foot El Pomar Space Education Center, a 5,070-square-foot Sky Terrace, and the 18,460-square-foot Leprino Family Atrium.

Controlling the Cosmos

When Dan Neafus first contacted me to discuss this installation, my fingertips began to twitch. The project was definitely high up on the coolness scale, and I have always had a soft spot for astronomy, having met my wife of 30 years during a planetarium visit.

I had called Chris Jensen, a fellow Crestron authorized independent programmer, and we arranged a meeting with Neafus to begin to scope out the concepts. After that initial meeting, Jensen and I decided that I would take a lead role in the project, with Jensen assuming a supporting role as needed.

The project has taken roughly a year to complete in three major phases. The first phase was complete in January 2003 and established control over the major dome components. These complex systems needed to be controlled in a simple way. Through the use of Crestron’s E-Control methodologies, I was able to create the world’s most expensive VCR — an SGI ONYX supercomputer feeding 11 simultaneous video feeds to the edge-blending system and on to the projectors.

Working with SGI visualization programmer Nigel Jenkins (Nebulus Designs) on the SGI, we had TCP/IP communications up and running with a combination of a random access and a traditional VCR (or DVD) user interface in about two hours, complete with autorenegotiation and linkage monitoring. From there the lighting system, sound system, and projection were brought under control. Where in a normal venue one or two projectors might be turned on, in this one, 11 needed to be fired up. They needed to be monitored because the lamps must be kept running in a coordinated manner to prevent one area of the sky from being darker than the other. The lighting and sound were similar to any large auditorium and did not present much in the way of a challenge from the initial control perspective.
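The lamp coordination described above could be checked along the following lines. This is an illustrative Python sketch, not the Crestron program used at the Gates. It assumes each projector can report its lamp hours, and both the function name and the 50-hour tolerance are hypothetical. The idea is simply to flag any projector whose lamp has drifted far from the rest of the set, since a much newer or older lamp would make its patch of sky brighter or darker.

```python
from statistics import median


def lamps_out_of_step(lamp_hours: dict, tolerance_hours: float = 50.0) -> list:
    """Return the IDs of projectors whose lamp hours differ from the median
    of the whole set by more than tolerance_hours (an assumed threshold)."""
    mid = median(lamp_hours.values())
    return sorted(
        pid for pid, hours in lamp_hours.items()
        if abs(hours - mid) > tolerance_hours
    )


# Example: 11 projectors, one of which has had a recent lamp replacement.
hours = {pid: 500.0 for pid in range(1, 12)}
hours[7] = 120.0
flagged = lamps_out_of_step(hours)  # projector 7 stands out from the others
```

Comparing against the median rather than the mean keeps a single outlier from shifting the reference point.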

Phase two aimed to bring these subsystems under automatic (scripted and timed) control, reducing the user interface to a single button concept. (This actually turned out to be about a dozen buttons, with the largest one saying, “It’s showtime!”) A series of countdown timers was needed for systems that triggered numerous events. Each event timer — Showtime, Preshow (lobby demonstration tape), and Flush/Fill (audience movement) — needed to have a manual override option and then would need to resync back to the automated sequence later.
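The countdown-timer behavior described above, with a manual override followed by a resync to the automated sequence, can be sketched in Python. This is a minimal illustration under stated assumptions, not the actual Crestron logic. The class name, its fields, and the choice to resync by snapping to the next slot on the original schedule grid are all hypothetical. Times are plain seconds so the example can be followed without a real clock.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class EventTimer:
    name: str
    interval: float     # seconds between automated firings (must be > 0)
    next_fire: float    # time of the next automated firing, in seconds
    held: bool = False  # True while an operator override is active

    def __post_init__(self) -> None:
        if self.interval <= 0:
            raise ValueError("interval must be positive")

    def remaining(self, now: float) -> Optional[float]:
        """Seconds until the event fires, or None while the timer is held."""
        return None if self.held else max(0.0, self.next_fire - now)

    def hold(self) -> None:
        """Manual override: freeze the countdown until resync() is called."""
        self.held = True

    def resync(self, now: float) -> None:
        """Release the override and advance to the next slot on the original grid."""
        self.held = False
        while self.next_fire <= now:
            self.next_fire += self.interval


# Example: a show every half hour; an operator holds the start for a late crowd.
showtime = EventTimer("Showtime", interval=1800.0, next_fire=1800.0)
before = showtime.remaining(1500.0)  # 300.0 seconds to go
showtime.hold()
during = showtime.remaining(1700.0)  # None: countdown is frozen
showtime.resync(2000.0)              # missed the 1800 slot, so the next is 3600
after = showtime.remaining(2000.0)   # 1600.0 seconds to go
```

Snapping back to the original grid keeps later shows on their published start times. A design that instead shifted every later show by the delay would be an equally plausible choice.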

The initial show control portion of the system was complete by June 2003. Matt Brownell and the museum staff then subjected it to rigorous testing. One rewrite in July 2003 addressed the realities of audience movement, thus completing the foundation for all future shows.

The final phase is the evolution of the system as new systems, shows, and uses for this venue are developed.

— Jim Pope, Professional Videographics

For More Information

Bag End

www.bagend.com

Barco

www.barco.com

Crestron Electronics

www.crestron.com

Crown Audio

www.crownaudio.com

High End Systems

www.highend.com

Lake Technology

www.lake.com.au

Martin

www.martin.com

MediaMatrix

http://mediamatrix.peavey.com

Meyer Sound

www.meyersound.com

Oxmoor

www.oxmoor.com

QuBit

www.qubit.com

SEOS Displays

www.seos.com

Silicon Graphics

www.sgi.com

Strand Lighting

www.strandlight.com